A prototype computer that mimics the analog physical computational dynamics of the brain could lead to more efficient and more powerful problem-solving platforms.

Using off-the-shelf electronic components, a Tsinghua University-led research team has built a complete prototype ‘reservoir’ computer as a low-power, high-speed alternative to today’s binary-based computer systems. The research, published in Nature Communications [1], demonstrates the potential of analog-based brain-mimicking hardware architecture for solving complex problems and efficiently training neural networks.

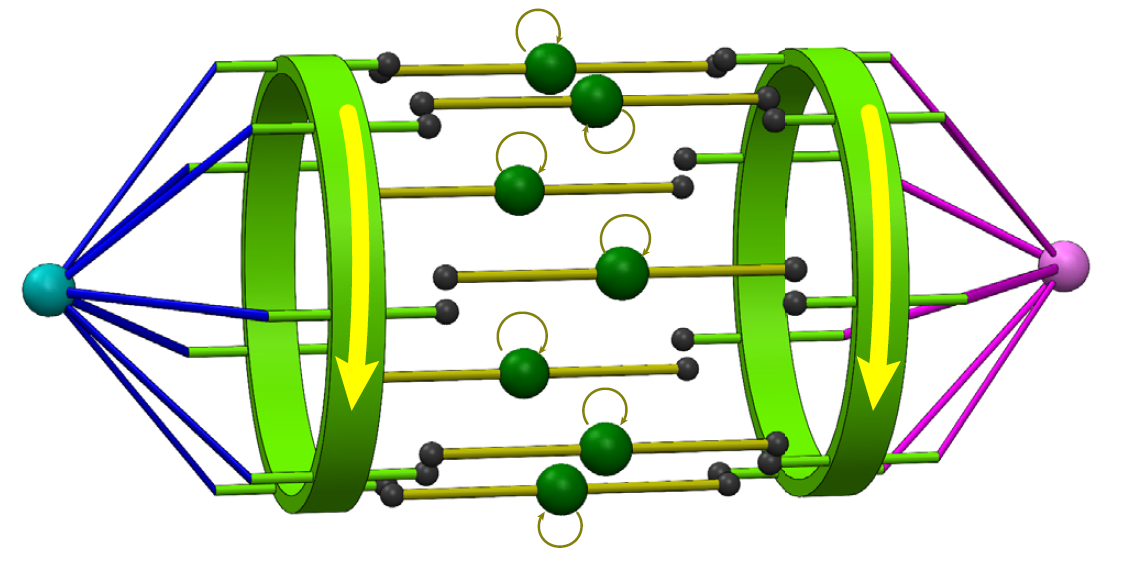

Rotating neurons (green) provide a cyclic reservoir that processes inputs (blue) to be read out as linearly classified outputs (pink).

The promise of reservoir computing

Today's computers are based on the processing of binary data – 0s and 1s – through complex logic networks. The brain, however, offers an enticingly different and promising computing principle. It operates on many different inputs at once, with each neuron connected to many others in a time-varying cascading network – a highly efficient biological computer that operates not on 1s and 0s, but in a non-linear analog domain. Mathematically, this could be modelled as a ‘reservoir’ computer, as He Qian, from the Beijing National Research Center for Information Science and Technology (BNRist) at Tsinghua University, explains.

“Reservoir computing is a form of neuromorphic computing that was first proposed in the 2000s,” says Qian. “It can be most easily understood using a lake analogy. If you throw stones into a lake, the ripples from each stone interact to produce a complex ripple pattern on the water surface as a fading memory containing the information about your stone-throwing activity. By analysing the ripples, it is possible to understand how many stones you threw, the time intervals between them, and even how big each stone was. The lake is a ‘reservoir’, and the ripple pattern is the reservoir’s state matrix. Similar processes, including high-dimensional mapping and readout, have been recently found in the mouse brain, which suggests our brain might operate as a complex reservoir computing system as well.”
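The lake analogy can be made concrete with a few lines of software. The following is a minimal sketch of a generic cyclic reservoir, not the team's circuit: the function name `run_cyclic_reservoir`, the ring weight of 0.8, the ±0.5 input weights and the `tanh` neuron response are all illustrative assumptions. A single ‘stone’ (an impulse input) leaves a ripple that circulates around the ring of neurons and fades over time, and the list of states plays the role of the state matrix.

```python
import math
import random


def run_cyclic_reservoir(inputs, n_neurons=8, ring_weight=0.8, seed=0):
    """Return the state matrix (one row per time step) of a simple cyclic reservoir.

    All parameter values are hypothetical choices made for illustration.
    """
    rng = random.Random(seed)
    # Fixed, untrained input weights of +0.5 or -0.5 (an assumption of this sketch).
    w_in = [rng.choice((-0.5, 0.5)) for _ in range(n_neurons)]
    state = [0.0] * n_neurons
    states = []
    for u in inputs:
        # Each neuron receives its ring neighbour's previous value plus the input.
        # Index i - 1 wraps to the last neuron when i == 0, closing the ring.
        state = [
            math.tanh(ring_weight * state[i - 1] + w_in[i] * u)
            for i in range(n_neurons)
        ]
        states.append(state)
    return states


# Throw one "stone" at t = 0, then leave the lake alone for 20 steps.
impulse = [1.0] + [0.0] * 20
states = run_cyclic_reservoir(impulse)

# Largest neuron amplitude at each time step: the ripple fades but does not vanish at once.
ripple = [max(abs(v) for v in row) for row in states]

# Stones thrown at different times leave different ripple patterns.
early = run_cyclic_reservoir([1.0, 0.0, 0.0])[-1]
late = run_cyclic_reservoir([0.0, 1.0, 0.0])[-1]
```

Because the ring weight is below 1, the ripple amplitude shrinks at every step once the input stops, which is the fading memory Qian describes. The final states `early` and `late` differ, so the timing of the stone can in principle be recovered from the state alone.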

Since the idea was proposed, researchers have been studying different ways to implement such a computing system in hardware, but so far such experiments have required elaborate input and readout systems. Qian and Huaqiang Wu from BNRist, together with a group of colleagues, have now designed, simulated and constructed an electronics-based reservoir computing system with integrated input and readout capability that demonstrates the promise of this approach for powerful, very low-energy computing.

Electronic implementation of a rotating neuron reservoir as a stack of eight 8-neuron circuits.

A benchtop brain

Using a stack of electronic circuits, including a readout module consisting of an array of memristors (resistive switching elements that ‘remember’ the amount of charge that has flowed through them), the team constructed a circuit modelling a reservoir of rotating neurons that respond to inputs in a sequential and interconnected way.

“Our main challenge was to find an equivalent pairing of a neural network algorithm and hardware that could be implemented,” says Qian. “In this case, the electronic rotational hardware has a similar function to the ‘lake’ reservoir, mapping low-dimensional inputs to a higher-dimensional space that can be linearly separated using a simple linear classifier.”
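Why mapping to a higher-dimensional space helps a linear classifier can be shown with a toy problem. The sketch below does not use a reservoir or the team's hardware; it swaps in a hand-picked nonlinear lift (the hypothetical `lift` function, which adds a product term) and a plain perceptron as the linear readout. The XOR pattern cannot be separated by any straight line in its original two dimensions, but it becomes linearly separable once a third, nonlinear dimension is added.

```python
def lift(x1, x2):
    """Hypothetical nonlinear lift: add the product term as a third dimension."""
    return [x1, x2, x1 * x2]


def predict(weights, bias, x):
    """Linear classifier: 1 if the weighted sum exceeds zero, else 0."""
    return 1 if sum(w * v for w, v in zip(weights, x)) + bias > 0 else 0


def train_perceptron(samples, labels, epochs=200):
    """Train a linear classifier (weights plus bias) with the perceptron rule."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            error = y - predict(weights, bias, x)  # -1, 0 or +1
            weights = [w + error * v for w, v in zip(weights, x)]
            bias += error
    return weights, bias


def count_correct(samples, labels, weights, bias):
    """Number of samples the trained linear classifier labels correctly."""
    return sum(predict(weights, bias, x) == y for x, y in zip(samples, labels))


points = [(0, 0), (0, 1), (1, 0), (1, 1)]
xor_labels = [0, 1, 1, 0]

raw = [list(p) for p in points]      # original 2-D inputs
lifted = [lift(*p) for p in points]  # same inputs mapped to 3-D

raw_score = count_correct(raw, xor_labels, *train_perceptron(raw, xor_labels))
lifted_score = count_correct(lifted, xor_labels, *train_perceptron(lifted, xor_labels))
```

On the lifted inputs the perceptron classifies all four points correctly, whereas on the raw inputs no linear classifier can get more than three right. In a reservoir computer the lift is not hand-picked: the reservoir's own dynamics supply the extra dimensions, and only the simple linear readout needs training.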

The team’s device achieves all-analog signal processing with extremely low power consumption, three orders of magnitude lower than any other reservoir computing system. It was used to accurately predict the future sequence of a chaotic time series, a task relevant to potential sensor applications.

“There is more to electronics than the binary transistor,” Qian says. “There remains much to be explored in the rich dynamics offered by electronics for neuromorphic reservoir computing, which is particularly interesting for brain-inspired computing and artificial intelligence due to its low training complexity and cost.”

References

[1] Liang, X. et al. Rotating neurons for all-analog implementation of cyclic reservoir computing. Nature Communications 13:1549 (2022).

Editor: Guo Lili